GKE WireGuard VPC Peering

I had read many blog posts on how to connect a GKE cluster to a WireGuard VPN (with bidirectional connectivity) over a private network. It turned out to be a fairly niche use case, and the steps in the existing documentation were not very clear, so this is my attempt to make things easier for anyone who runs into the same problem.

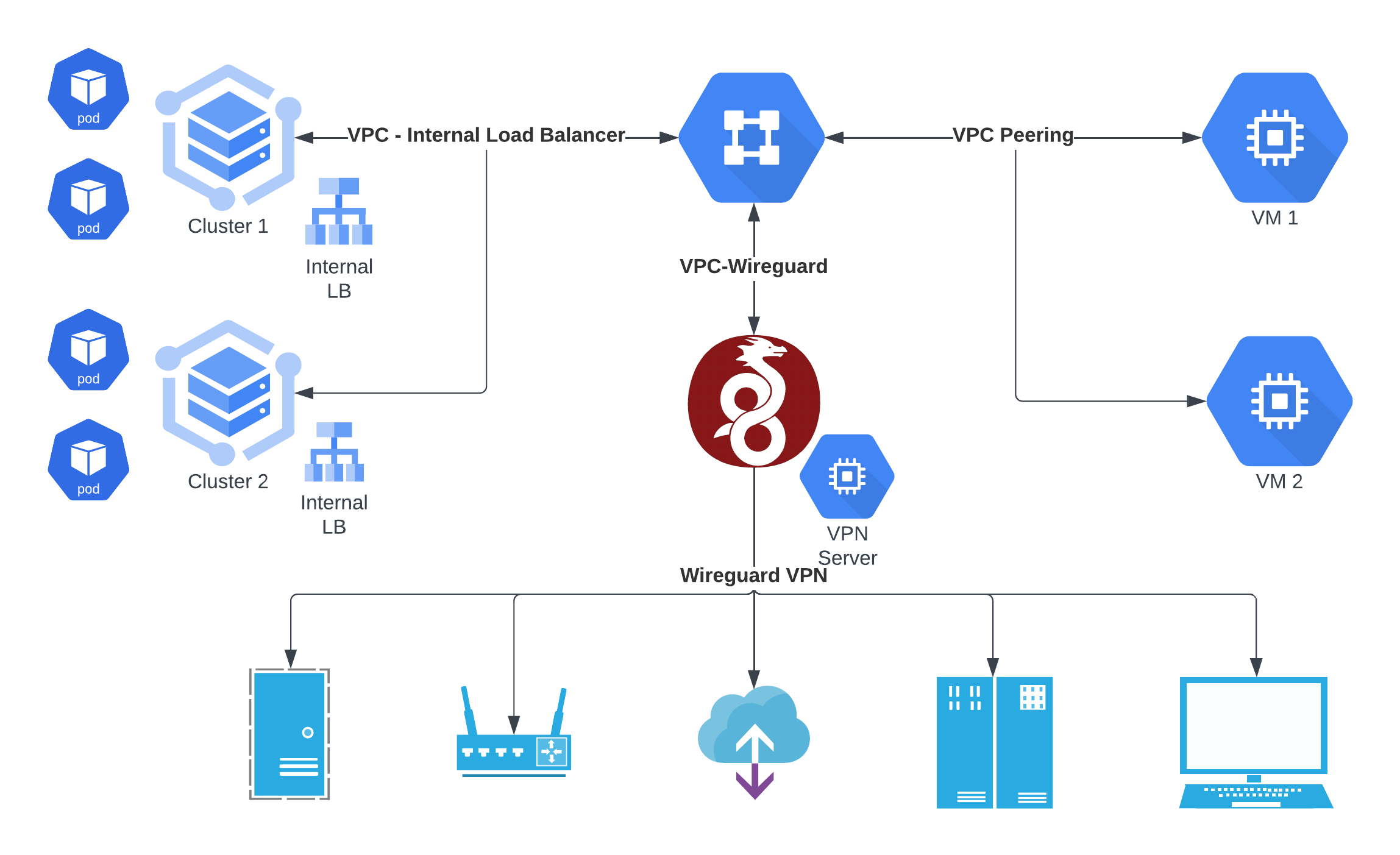

Firstly, your go-to references should be the GCP Documentation and this interesting post. The diagram above shows what I wanted to build.

I wanted our services to communicate across three network planes:

- Device services exposed on WireGuard VPN nodes

- Kubernetes services exposed on an Internal Load Balancer

- VM services exposed on the internal network interfaces of VMs

To allow this interconnectivity, we have to configure VPC Peering on GCP (details below) so that pods can reach VPN nodes (for configuring the gateway) and VPN nodes can reach Internal Load Balancers (via Envoy, for example to push data to Kafka). To achieve this, we need to do the following:

WireGuard VPN Setup

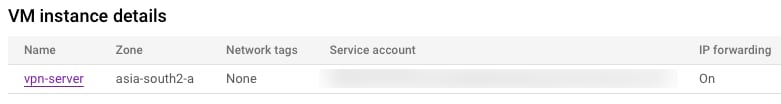

Create a standard Compute Engine VM and install WireGuard by following a tutorial like this one.

While creating the VM, it is important to turn on IP forwarding; otherwise it is cumbersome to enable it later.
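As a sketch, assuming the gcloud CLI, a hypothetical VM name `wg-vpn`, zone `us-central1-a`, machine type `e2-small` and a Debian 12 image, the VM can be created with IP forwarding enabled from the start via `--can-ip-forward`. The `run` helper only prints each command unless `DRY_RUN=0` is set, so the script is safe to read through first.

```shell
#!/usr/bin/env bash
# Sketch: create the WireGuard VM with IP forwarding enabled at creation time.
# Assumptions (hypothetical): VM name, zone, machine type and image family.
set -euo pipefail

VPN_VM="${VPN_VM:-wg-vpn}"
ZONE="${ZONE:-us-central1-a}"

# Print commands by default; set DRY_RUN=0 to actually execute them.
run() {
  if [[ "${DRY_RUN:-1}" == "1" ]]; then
    echo "+ $*"
  else
    "$@"
  fi
}

create_vpn_vm() {
  run gcloud compute instances create "$VPN_VM" \
    --zone="$ZONE" \
    --machine-type=e2-small \
    --network=default \
    --image-family=debian-12 \
    --image-project=debian-cloud \
    --can-ip-forward
  # Reads back the canIpForward field so you can confirm forwarding is on.
  run gcloud compute instances describe "$VPN_VM" \
    --zone="$ZONE" --format="value(canIpForward)"
}

create_vpn_vm
```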

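The important part is to add bidirectional forwarding and masquerading (NAT) rules like the following to the [Interface] section of wg0.conf (or equivalent). Here wg0 is the WireGuard interface and ens4 is typically the VM's primary network interface on GCE: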

```ini
PostUp = iptables -A FORWARD -i wg0 -j ACCEPT; iptables -A FORWARD -o wg0 -j ACCEPT; iptables -t nat -A POSTROUTING -o wg0 -j MASQUERADE; iptables -A FORWARD -i ens4 -j ACCEPT; iptables -A FORWARD -o ens4 -j ACCEPT; iptables -t nat -A POSTROUTING -o ens4 -j MASQUERADE;
PostDown = iptables -D FORWARD -i wg0 -j ACCEPT; iptables -D FORWARD -o wg0 -j ACCEPT; iptables -t nat -D POSTROUTING -o wg0 -j MASQUERADE; iptables -D FORWARD -i ens4 -j ACCEPT; iptables -D FORWARD -o ens4 -j ACCEPT; iptables -t nat -D POSTROUTING -o ens4 -j MASQUERADE;
```

Then in /etc/sysctl.conf, uncomment the following line:

```
# Uncomment the next line to enable packet forwarding for IPv4
net.ipv4.ip_forward=1
```

Then run sysctl -p as root to apply the changes.
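To double-check that the change took effect, the kernel flag can be read back from procfs, which is equivalent to running `sysctl -n net.ipv4.ip_forward`. This is a small sketch; the optional path argument exists only so the function can be exercised against a test file.

```shell
#!/usr/bin/env bash
# Sketch: report whether IPv4 forwarding is currently enabled on this host.
set -euo pipefail

ip_forward_status() {
  # Defaults to the live kernel flag; a different file can be passed for testing.
  local flag_file="${1:-/proc/sys/net/ipv4/ip_forward}"
  if [[ ! -r "$flag_file" ]]; then
    echo "unknown"
  elif [[ "$(<"$flag_file")" == "1" ]]; then
    echo "enabled"
  else
    echo "disabled"
  fi
}

ip_forward_status
```

On the VPN VM this should print `enabled`; if it prints `disabled`, re-check /etc/sysctl.conf and rerun sysctl -p.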

With these changes, packets arriving on the VPN interface can be forwarded to the GCP internal network and vice versa, with the VM masquerading (source-NATing) traffic as it leaves on either interface.
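The PostUp/PostDown lines above apply the same three rules to each of the two interfaces: accept forwarded traffic coming in, accept it going out, and masquerade on egress. Here is a minimal sketch that regenerates both lines from the interface names, assuming `wg0` and `ens4` as in the config above. It can be handy if your VM's primary interface has a different name.

```shell
#!/usr/bin/env bash
# Sketch: render the wg0.conf PostUp/PostDown hooks for a WireGuard interface
# and the VM's primary NIC (wg0 and ens4 as in the config above).
set -euo pipefail

render_wg_hooks() {
  local wg_if="${1:-wg0}" lan_if="${2:-ens4}"
  local sep='; ' up="" down="" ifc table chain spec prefix entry
  local rules=()

  # Same three rules per interface: forward in, forward out, masquerade on egress.
  for ifc in "$wg_if" "$lan_if"; do
    rules+=("filter FORWARD -i ${ifc} -j ACCEPT")
    rules+=("filter FORWARD -o ${ifc} -j ACCEPT")
    rules+=("nat POSTROUTING -o ${ifc} -j MASQUERADE")
  done

  for entry in "${rules[@]}"; do
    read -r table chain spec <<<"$entry"
    # "filter" is iptables' default table, so it needs no -t flag.
    if [[ "$table" == "filter" ]]; then
      prefix="iptables"
    else
      prefix="iptables -t ${table}"
    fi
    up+="${prefix} -A ${chain} ${spec}${sep}"
    down+="${prefix} -D ${chain} ${spec}${sep}"
  done

  # Drop the trailing separator before printing each line.
  printf 'PostUp = %s\n' "${up%"$sep"}"
  printf 'PostDown = %s\n' "${down%"$sep"}"
}

render_wg_hooks wg0 ens4
```

PostDown mirrors PostUp with -D instead of -A, so bringing the interface down removes exactly the rules that were added.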

GKE Setup

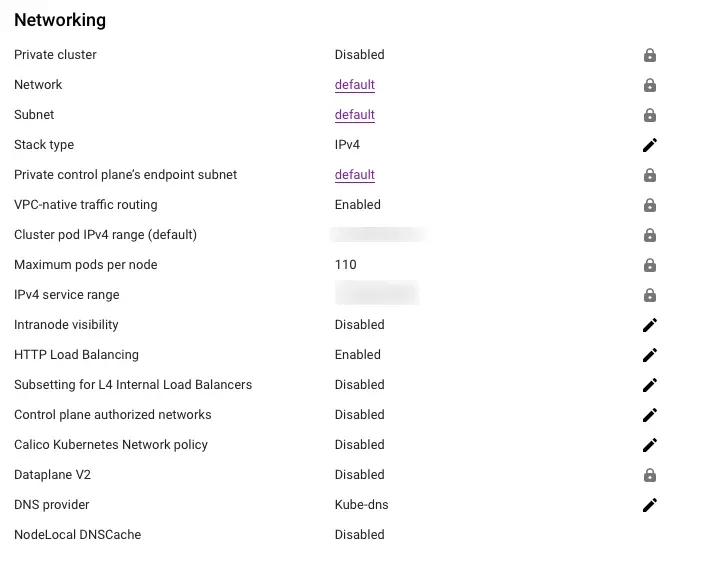

Ensure that the cluster's Networking panel in the GKE console looks like the screenshot above.

Take note of the two networks shown there, as we'll need them later.
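The two networks are presumably the cluster's Pod and Service address ranges. Assuming so, this sketch reads them from the CLI instead of the console. The cluster name `my-cluster` and zone are hypothetical placeholders, and the command is only printed unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch: print the cluster's Pod and Service IPv4 ranges (tab-separated).
# Assumptions (hypothetical): cluster name and zone.
set -euo pipefail

CLUSTER="${CLUSTER:-my-cluster}"
ZONE="${ZONE:-us-central1-a}"

# Print commands by default; set DRY_RUN=0 to actually execute them.
run() {
  if [[ "${DRY_RUN:-1}" == "1" ]]; then
    echo "+ $*"
  else
    "$@"
  fi
}

cluster_ranges() {
  run gcloud container clusters describe "$CLUSTER" \
    --zone="$ZONE" \
    --format="value(clusterIpv4Cidr,servicesIpv4Cidr)"
}

cluster_ranges
```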

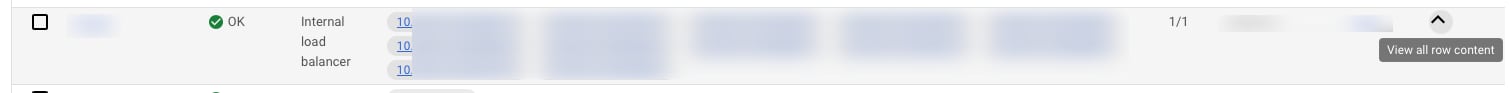

Next, to expose Kubernetes services inside the VPC, you need an Internal Load Balancer. Once created, it should show up in the console with an IP address from the default VPC range.
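Here is a minimal sketch of such a Service. It assumes a hypothetical Envoy Deployment labelled `app: envoy` that listens on port 8080, exposed on port 80. On GKE, adding the `networking.gke.io/load-balancer-type: "Internal"` annotation to a `LoadBalancer` Service requests an internal load balancer instead of an external one. The manifest is printed by default and is piped to `kubectl apply` only when `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch: a LoadBalancer Service that GKE provisions as an internal load balancer.
# Assumptions (hypothetical): Service name, pod label and ports.
set -euo pipefail

render_internal_lb() {
  local name="$1" app="$2" port="$3" target_port="$4"
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${name}
  annotations:
    networking.gke.io/load-balancer-type: "Internal"
spec:
  type: LoadBalancer
  selector:
    app: ${app}
  ports:
    - protocol: TCP
      port: ${port}
      targetPort: ${target_port}
EOF
}

manifest="$(render_internal_lb envoy-ilb envoy 80 8080)"
if [[ "${DRY_RUN:-1}" == "1" ]]; then
  printf '%s\n' "$manifest"
else
  printf '%s\n' "$manifest" | kubectl apply -f -
fi
```

After applying, `kubectl get service envoy-ilb` should eventually show an EXTERNAL-IP taken from the VPC's internal range rather than a public address.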

In effect, we've created a cluster that is attached to the default GCP network, so it shares its networking infrastructure with our Compute Engine VMs. This is possible because we created a Standard cluster rather than an Autopilot one, and it shares the VPC natively.
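For reference, here is a sketch of creating such a cluster with gcloud. It assumes a zonal Standard cluster with a hypothetical name, zone and node count. The `--enable-ip-alias` flag makes the cluster VPC-native, so Pod IPs come from secondary ranges of the default subnet and are routable within the VPC.

```shell
#!/usr/bin/env bash
# Sketch: a Standard (non-Autopilot), VPC-native cluster on the default network.
# Assumptions (hypothetical): cluster name, zone and node count.
set -euo pipefail

CLUSTER="${CLUSTER:-my-cluster}"
ZONE="${ZONE:-us-central1-a}"

# Print commands by default; set DRY_RUN=0 to actually execute them.
run() {
  if [[ "${DRY_RUN:-1}" == "1" ]]; then
    echo "+ $*"
  else
    "$@"
  fi
}

create_cluster() {
  run gcloud container clusters create "$CLUSTER" \
    --zone="$ZONE" \
    --network=default \
    --subnetwork=default \
    --enable-ip-alias \
    --num-nodes=3
}

create_cluster
```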

VPC Setup

To reach other services inside the VPC, as well as GKE itself, you need to add two things: a route and a firewall change.

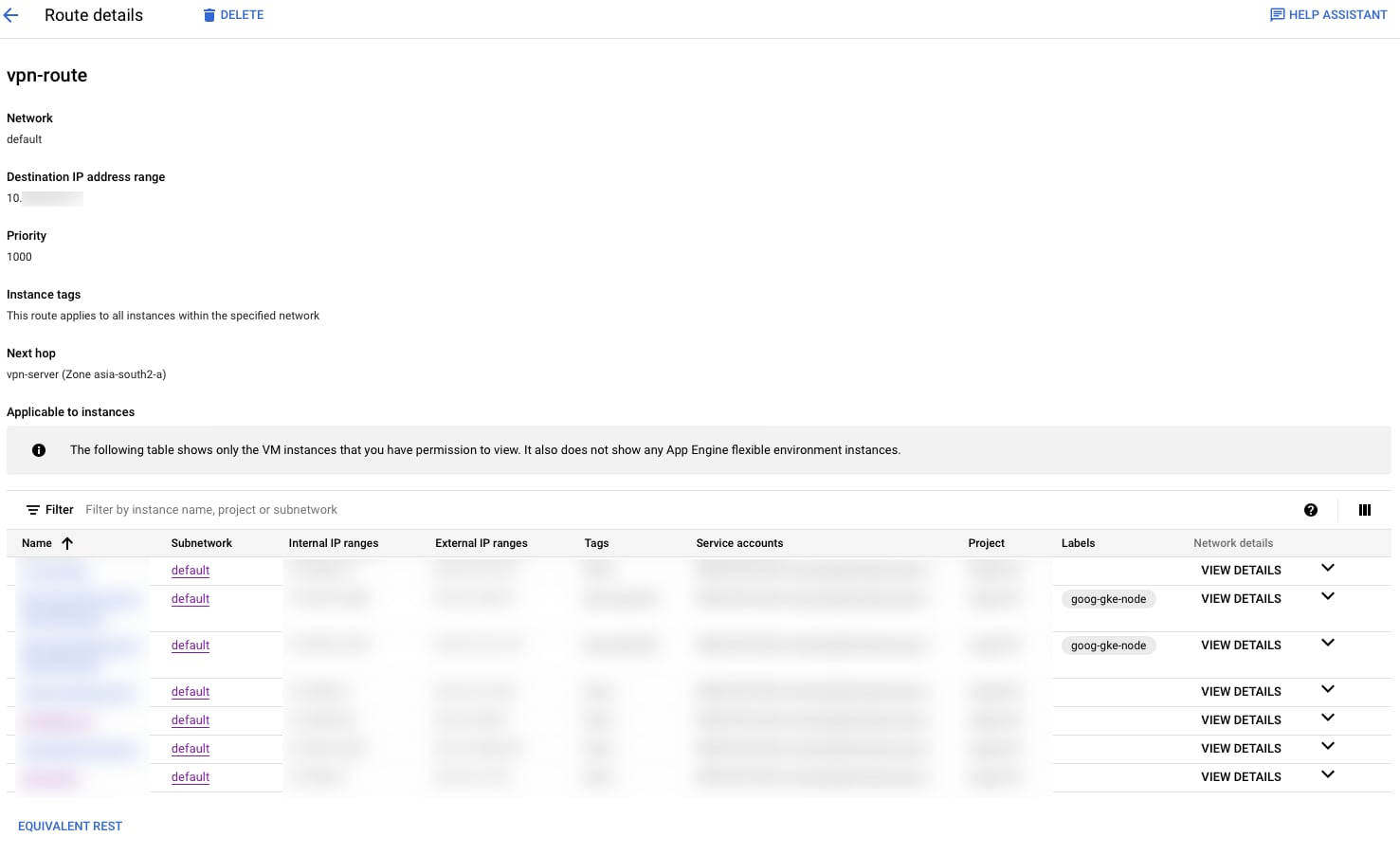

Route

Add a new route to the VPC whose destination is the VPN network range and whose next hop is the WireGuard VPN server instance. VPC members then know where to send traffic destined for VPN addresses.
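As a sketch, the same route can be created with gcloud. The route name `vpn-via-wireguard`, the VM name `wg-vpn`, the zone, and the VPN range `10.100.0.0/24` are all hypothetical placeholders; substitute the address range your WireGuard peers actually use. The command is printed unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch: route the VPN address range to the WireGuard VM as next hop.
# Assumptions (hypothetical): route name, VM name, zone and VPN CIDR.
set -euo pipefail

VPN_VM="${VPN_VM:-wg-vpn}"
ZONE="${ZONE:-us-central1-a}"
VPN_CIDR="${VPN_CIDR:-10.100.0.0/24}"

# Print commands by default; set DRY_RUN=0 to actually execute them.
run() {
  if [[ "${DRY_RUN:-1}" == "1" ]]; then
    echo "+ $*"
  else
    "$@"
  fi
}

create_vpn_route() {
  run gcloud compute routes create vpn-via-wireguard \
    --network=default \
    --destination-range="$VPN_CIDR" \
    --next-hop-instance="$VPN_VM" \
    --next-hop-instance-zone="$ZONE"
}

create_vpn_route
```

A route like this relies on IP forwarding being enabled on the VM; without it, GCP drops packets whose destination is not the VM's own address.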

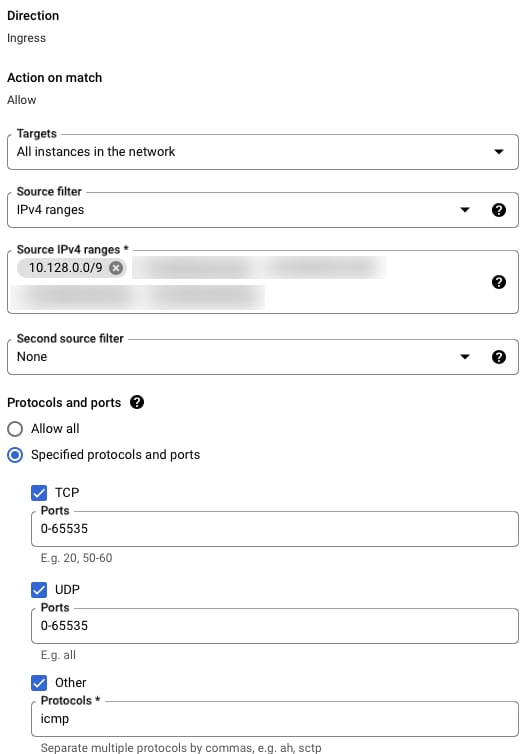

Firewall

Modify the firewall rule default-allow-internal and add the IP ranges for the VMs, the VPN and the Kubernetes network allocations to its source ranges. This is needed because the rule's default source range only covers the default subnet configuration. Since we've added custom ranges for Kubernetes and WireGuard, the firewall must be updated to treat them as part of our trusted internal network.
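Here is a sketch of the same change with gcloud. `10.128.0.0/9` is the default source range of default-allow-internal in an auto-mode default network. The VPN, Pod and Service ranges below are hypothetical placeholders; replace them with the VPN range you chose and the two ranges noted from the GKE Networking panel. The command is printed unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch: widen default-allow-internal to cover VM, VPN, Pod and Service ranges.
# Assumptions (hypothetical): the VPN, Pod and Service CIDRs below.
set -euo pipefail

VM_CIDR="${VM_CIDR:-10.128.0.0/9}"     # default auto-mode subnet range
VPN_CIDR="${VPN_CIDR:-10.100.0.0/24}"  # hypothetical WireGuard peer range
POD_CIDR="${POD_CIDR:-10.4.0.0/14}"    # hypothetical GKE Pod range
SVC_CIDR="${SVC_CIDR:-10.8.0.0/20}"    # hypothetical GKE Service range

# Print commands by default; set DRY_RUN=0 to actually execute them.
run() {
  if [[ "${DRY_RUN:-1}" == "1" ]]; then
    echo "+ $*"
  else
    "$@"
  fi
}

update_internal_firewall() {
  local ranges="${VM_CIDR},${VPN_CIDR},${POD_CIDR},${SVC_CIDR}"
  run gcloud compute firewall-rules update default-allow-internal \
    --source-ranges="$ranges"
}

update_internal_firewall
```

Note that `--source-ranges` on `update` replaces the rule's existing list rather than appending to it, which is why the default 10.128.0.0/9 range is repeated.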

With these changes, the whole interconnectivity setup should work. I hope this helps!